Don't Detect, Just Correct: Can LLMs Defuse Deceptive Patterns Directly?

Late-Breaking Work at ACM CHI '25

by René Schäfer, Paul Preuschoff, Rene Niewianda, Sophie Hahn, Kevin Fiedler, and Jan Borchers

Abstract

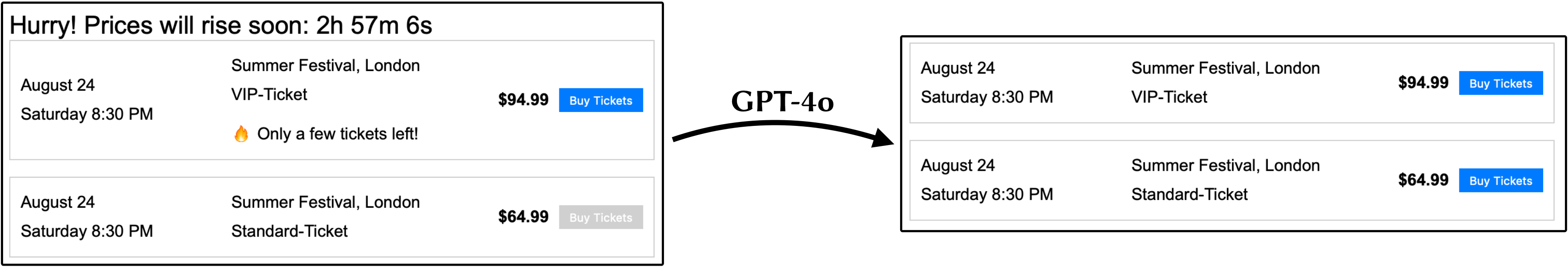

Deceptive (or dark) patterns, UI design strategies manipulating users against their best interests, have become widespread. We introduce an idea for technical countermeasures against such patterns. It feeds the HTML code of web elements that may contain deceptive patterns into a large language model (LLM) and iteratively prompts it to make these elements less manipulative. We evaluated our approach with GPT-4o and self-created web elements. The most consistent results appeared after three iterations, with 91% of deceptive elements being less manipulative and 96% not more manipulative than originally. We contribute our minimal and improved prompts and a labeled dataset of all 2,600 redesigns with the LLM's justifications for its changes. We also performed preliminary tests on real websites to show and discuss the feasibility of our approach in the field. Our findings suggest that LLMs can defuse certain deceptive patterns without prior model training, promising a major advance in fighting these manipulations.
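The iterative prompting loop described in the abstract could be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the `PROMPT` wording, the `defuse` function, and the `llm` callable (any function that sends a prompt string to a model and returns its reply) are all assumptions made for this example. The default of three iterations mirrors the setting the abstract reports as most consistent.

```python
from typing import Callable, List

# Hypothetical instruction text; the authors' actual prompts are part of
# their published contribution and are NOT reproduced here.
PROMPT = (
    "Rewrite the following HTML element so that it is less manipulative "
    "for the user. Return only the revised HTML.\n\n{html}"
)


def defuse(html: str, llm: Callable[[str], str], iterations: int = 3) -> List[str]:
    """Return the original HTML followed by each successive redesign.

    `llm` is an assumed callable wrapping whichever model is used
    (the paper evaluated GPT-4o); it takes a prompt and returns HTML.
    """
    versions = [html]
    for _ in range(iterations):
        # Each round feeds the previous redesign back into the model.
        versions.append(llm(PROMPT.format(html=versions[-1])))
    return versions
```

Passing the model in as a plain callable keeps the sketch independent of any particular client library, and returning every intermediate version makes it possible to compare iterations, as the evaluation in the abstract does.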

Data

We provide all our data on OSF: http://osf.io/tgrw9/

Publications

- René Schäfer, Paul Preuschoff, Rene Niewianda, Sophie Hahn, Kevin Fiedler, and Jan Borchers. Don't Detect, Just Correct: Can LLMs Defuse Deceptive Patterns Directly? In Extended Abstracts of the 2025 CHI Conference on Human Factors in Computing Systems, CHI EA '25, pages 11, Association for Computing Machinery, April 2025.